Why AI needs a Proof-of-Concept to Production pipeline

Organisations that treat AI as a series of disconnected experiments will end up with expensive demos, shadow IT and shadow AI, and mounting risk rather than real business value.

Most organisations are already running AI pilots and proofs-of-concept (PoCs), often in innovation labs or with enthusiastic teams experimenting with new tools. Without a defined pathway into production, these efforts stall at “interesting prototype” and never make it into the core processes where value is actually realised. Estimates published in mid-2025 suggest that most AI projects never get from PoC into production:

- Over 70% of AI projects fail to move from pilot to production.

- Nearly 88% of AI PoCs are abandoned and never fully deployed.

- AI project failure rates are nearly double those of traditional IT projects.

A structured pipeline can help to turn AI work from ad‑hoc heroics into a repeatable capability. It forces clarity about the problem, the data, the risks, and the change management needed so that AI systems can be safely scaled and supported over time.

From idea to impact: key stages

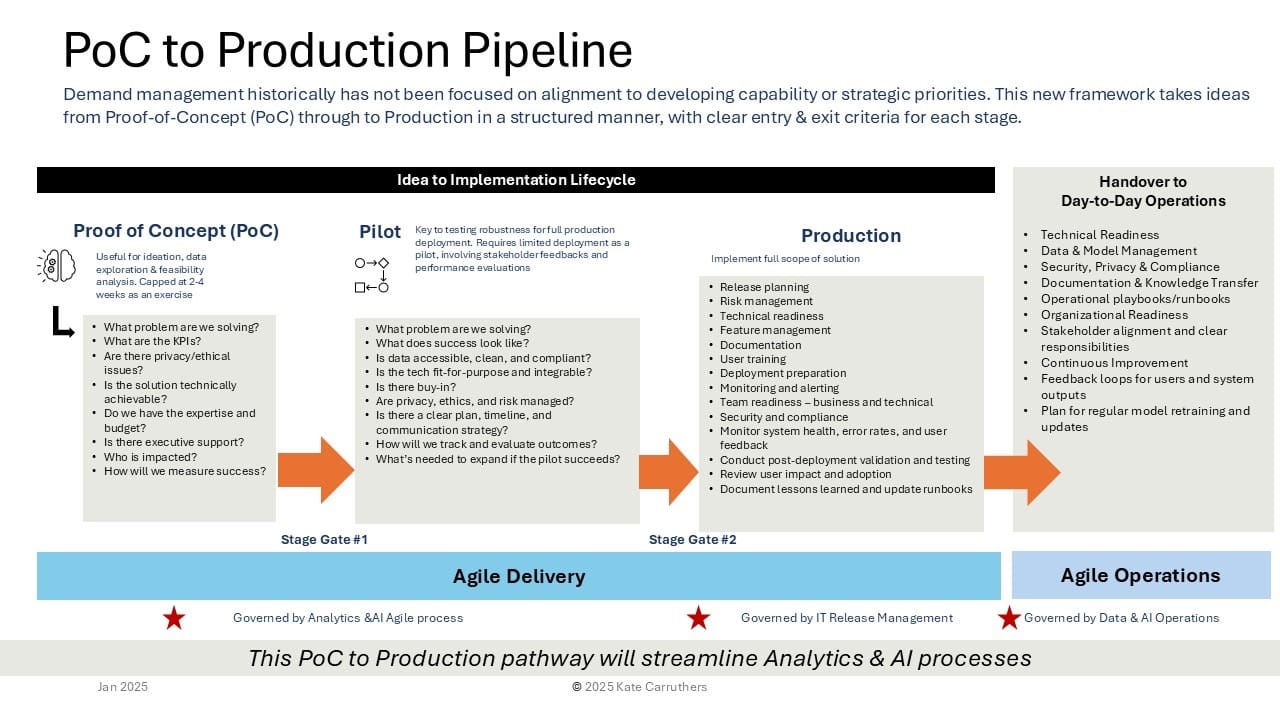

This diagram, which I developed to explain the concept to my students, lays out a lifecycle that is increasingly a de facto pattern across mature AI teams:

Proof of Concept → Pilot → Production → Handover to Operations.

- Proof of Concept (PoC): A bounded exercise (often a few weeks) to test technical feasibility and explore whether AI can plausibly solve the problem, with clear hypotheses and success criteria. This is where you surface basic data issues, privacy questions, and whether there is meaningful business value at all.

- Pilot: A limited real‑world deployment to validate performance, usability, and value with actual users and live data. Here you start to treat the solution as a product: you test robustness, refine KPIs, and check readiness for scale including operational, ethical, and risk considerations.

- Production: Implementation of the full‑scope solution with proper release planning, documentation, monitoring, and user training. This is where MLOps and LLMOps disciplines come to the fore: CI/CD for models and prompts, environment separation, observability, and rollback strategies.

- Handover to Operations: Transition from project mode to business‑as‑usual, including data and model management, governance, and continuous improvement. This stage ensures that models are retrained, risks are monitored, and feedback loops from users and system telemetry actually drive iteration.

This pipeline deliberately inserts stage gates between PoC, pilot, and production so that leaders can make evidence‑based decisions about whether to stop, pivot, or scale.
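One way to picture the stage gates is as a small state machine. The sketch below is illustrative only: the names `Stage`, `Decision`, and `apply_gate` are hypothetical, and the rules it encodes (stop ends the initiative, pivot reworks the current stage, scale advances one stage) are my assumptions about how a gate decision might be recorded, not a prescribed implementation.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    POC = "Proof of Concept"
    PILOT = "Pilot"
    PRODUCTION = "Production"
    OPERATIONS = "Handover to Operations"


class Decision(Enum):
    STOP = "stop"
    PIVOT = "pivot"
    SCALE = "scale"


# Lifecycle order as described in the article.
LIFECYCLE = [Stage.POC, Stage.PILOT, Stage.PRODUCTION, Stage.OPERATIONS]


def apply_gate(current: Stage, decision: Decision) -> Optional[Stage]:
    """Return the stage an initiative is in after a gate review.

    Assumptions for this sketch: STOP ends the initiative (None),
    PIVOT keeps it in the current stage to be reworked, and SCALE
    advances it one stage. Operations is treated as the final stage.
    """
    if decision is Decision.STOP:
        return None
    if decision is Decision.PIVOT:
        return current
    position = LIFECYCLE.index(current)
    # Clamp at the last stage so a SCALE decision in Operations stays there.
    return LIFECYCLE[min(position + 1, len(LIFECYCLE) - 1)]
```

For example, `apply_gate(Stage.POC, Decision.SCALE)` returns `Stage.PILOT`, while `apply_gate(Stage.PILOT, Decision.STOP)` returns `None`. The point of making the decision explicit is that every initiative always has a recorded state and a recorded reason for being there.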

What goes wrong without a pipeline?

When organisations skip this structured pathway, the failure modes are predictable.

- Pilot purgatory: AI initiatives stay trapped as endless experiments because there is no defined route, budget, or ownership to take them into production. Teams get disillusioned and executives start to see AI as hype rather than capability.

- Shadow AI and unmanaged risk: Individual teams deploy models and gen‑AI workflows directly into their operational processes without central oversight, leading to inconsistent controls, duplicated effort, and governance blind spots. This is particularly dangerous where AI decisions touch customers, safety, or regulated domains.

- Operational fragility: Models are treated as one‑off projects rather than living systems that require monitoring, retraining, and incident management. Without robust pipelines and observability, performance quietly degrades as data drifts, and the organisation often discovers issues only when something fails in production.

- Missed learning and reuse: Each team reinvents environment setup, data pipelines, and evaluation frameworks because there is no shared pattern for moving from PoC to production. The result is higher cost, slower delivery, and fragmented institutional knowledge.
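To make the data drift point concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI), which compares the distribution of a feature in live data against a baseline such as the training set. The function name, the choice of ten equal-width bins, and the small floor used to avoid taking the logarithm of zero are all assumptions made for illustration; a production monitoring setup would typically rely on an established observability tool rather than hand-rolled code.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float], live: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one feature; larger values mean more drift."""
    lo, hi = min(baseline), max(baseline)
    # Equal-width bins over the baseline range; fall back to 1.0 if the range is zero.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[max(0, min(bins - 1, index))] += 1
        # A small floor avoids log(0) for empty bins (an assumption of this sketch).
        return [max(count / len(values), 1e-6) for count in counts]

    expected = proportions(baseline)
    observed = proportions(live)
    return sum((o - e) * math.log(o / e) for e, o in zip(expected, observed))
```

Identical samples give a PSI of zero, and the value grows as the live distribution moves away from the baseline. What counts as "too much" drift is a judgment call that each team would need to set and review as part of its operational runbook.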

Designing a robust PoC‑to‑Prod pathway

For senior leaders, the goal is not just to have a few successful AI projects, but to build an organisational capability that can repeatedly take ideas from concept to production safely and at pace.

Some practical design principles:

- Clarify the “why” up front: Every PoC should start with a well‑articulated problem, target outcomes, and measurable success metrics, linked to strategy rather than technology curiosity. Treat PoCs as disciplined feasibility tests, not open‑ended explorations.

- Standardise stage gates and criteria: Define what “good enough to proceed” means at each stage: data quality thresholds, KPI improvements, risk assessments, stakeholder buy‑in, and architecture readiness. Make these criteria transparent so teams know how to design their work to progress.

- Invest in the boring plumbing: Reusable data pipelines, environment templates, CI/CD for models and prompts, MLOps and LLMOps practices, and common observability patterns dramatically shorten the path from pilot to production. This is the unglamorous infrastructure that differentiates organisations that scale AI from those that stay stuck in prototype land.

- Tie into AI governance rather than working around it: Your PoC‑to‑prod pipeline should be tightly coupled with AI risk, ethics, and compliance processes, including privacy impact assessments, model documentation, and approval workflows. AI governance works best when it is embedded into the delivery pipeline rather than bolted on as a final gate.

- Treat AI as ongoing change, not a one‑off project: Handover to operations must include training, updated runbooks, communication plans, and defined ownership for monitoring and improvement. This aligns with the broader reality that AI adoption is fundamentally a change management exercise.
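As an illustration of what transparent, standardised gate criteria could look like, the sketch below writes them down as data and checks a team's evidence against them. Everything here is hypothetical: the class and field names, the four criteria chosen, and the threshold values (95% data quality, 10% KPI uplift) are placeholders that an organisation would replace with its own definitions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GateCriteria:
    min_data_quality: float            # share of records passing validation, 0..1
    min_kpi_uplift: float              # relative improvement over the baseline
    require_risk_assessment: bool = True
    require_sponsor_sign_off: bool = True


@dataclass(frozen=True)
class GateEvidence:
    data_quality: float
    kpi_uplift: float
    risk_assessment_done: bool
    sponsor_signed_off: bool


def unmet_criteria(criteria: GateCriteria, evidence: GateEvidence) -> List[str]:
    """List the criteria not yet met; an empty list means the gate is passed."""
    unmet = []
    if evidence.data_quality < criteria.min_data_quality:
        unmet.append("data_quality")
    if evidence.kpi_uplift < criteria.min_kpi_uplift:
        unmet.append("kpi_uplift")
    if criteria.require_risk_assessment and not evidence.risk_assessment_done:
        unmet.append("risk_assessment")
    if criteria.require_sponsor_sign_off and not evidence.sponsor_signed_off:
        unmet.append("sponsor_sign_off")
    return unmet


# Placeholder thresholds for a pilot-to-production gate (assumed values).
PILOT_TO_PRODUCTION = GateCriteria(min_data_quality=0.95, min_kpi_uplift=0.10)
```

A pilot that reports `GateEvidence(data_quality=0.97, kpi_uplift=0.04, risk_assessment_done=True, sponsor_signed_off=True)` would get back `["kpi_uplift"]`, which tells the team exactly what stands between them and production. Returning the list of gaps, rather than a simple pass or fail, is a deliberate choice: it supports the aim of letting teams design their work to progress.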

What this means for your AI strategy

For executives, the question is no longer “Do we have AI pilots?” but “Do we have a reliable, governable pathway from proof of concept to production that we can trust with core business processes?” The organisations that answer yes will compound their learning, build trust with regulators and customers, and avoid the trap of impressive demos with negligible impact.

If you already have clusters of AI activity, the next step is to make the implicit explicit: map your current idea‑to‑implementation journey, agree the stage gates, and deliberately wire in governance, observability, and operations. From there you can start to treat your PoC‑to‑production pipeline as a strategic asset in its own right.