People keep asking me: “What do we actually do about AI governance?”

Everyone has a framework, a model, or a glossy diagram. But if you sit in a real organisation, with messy legacy systems, half‑finished data lakes, and vendors knocking on your door with “AI‑powered” everything, the question is much more basic: how do we make sure this stuff is safe, lawful, useful, and aligned with what we’re here to do?

In other words, how do we govern it?

Start with why: AI rides on data

I’ve said for years that data governance is the foundation for information and cyber security. Now that AI has gone mainstream, that foundation suddenly matters a whole lot more.

Most of the AI governance conversation skips past this and goes straight to model cards, algorithmic audits, or “responsible AI” principles. That’s all important, but if you don’t know where your data is, who has access to it, and how well it’s protected, you’re building AI on sand.

Before you get too excited about agents and copilots, you still need to be able to answer the big five data questions:

- Do you know the value of your data?

- Do you know who has access to your data?

- Do you know where your data is?

- Do you know who is protecting your data?

- Do you know how well your data is protected?

If you can’t answer those, your first AI governance task is actually to get serious about data governance.
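One way to make those five questions bite is to record the answers per data set rather than in people’s heads. What follows is a minimal sketch, not a standard: the `DataAsset` record, its field names, and the `unanswered` helper are all my own hypothetical names for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class DataAsset:
    """Hypothetical record: one flag per question, True once it is answered."""
    name: str
    value_known: bool = False          # Do you know the value of your data?
    access_known: bool = False         # Do you know who has access to it?
    location_known: bool = False       # Do you know where it is?
    protector_known: bool = False      # Do you know who is protecting it?
    protection_assessed: bool = False  # Do you know how well it is protected?


def unanswered(asset: DataAsset) -> list[str]:
    """Return the names of the questions still open for this asset."""
    return [
        f.name
        for f in fields(asset)
        if isinstance(getattr(asset, f.name), bool) and not getattr(asset, f.name)
    ]


def ready_for_ai(asset: DataAsset) -> bool:
    """An asset is only a candidate for AI use once no question is open."""
    return not unanswered(asset)
```

For example, `unanswered(DataAsset("customer_crm", value_known=True, location_known=True))` returns `['access_known', 'protector_known', 'protection_assessed']`, which is a to-do list for data governance before anyone plugs a copilot into that data set.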

From principles to practice

Most organisations now have a set of AI principles floating around somewhere: fairness, accountability, transparency, human‑centricity, and so on. They look lovely on a slide. The trouble is that they often stop there.

Effective AI governance is about making those principles bite in practice. That means, at a minimum:

- Translating high‑level principles into concrete controls and decision rights.

- Being clear about who can approve what: use cases, models, vendors, data sets.

- Embedding checks into existing processes rather than creating another parallel bureaucracy.

Think about how you already govern information security, privacy, and risk. You probably have risk registers, assurance processes, internal audit, and board reporting. AI governance should plug into those, not sit off to the side as a shiny new thing.

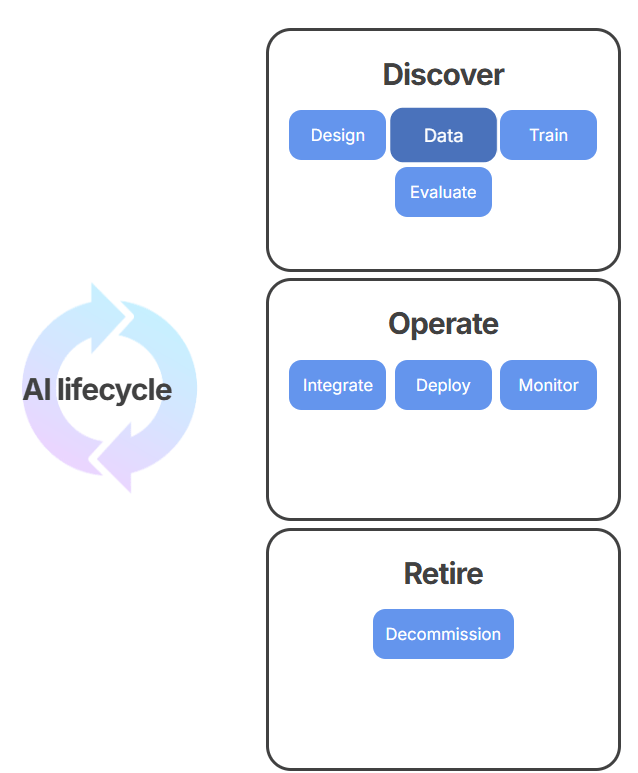

An example I’ve seen work is treating AI systems much like any other critical system: you require a business owner, a technical owner, a risk assessment, and a clear lifecycle from experiment through to production and retirement. The difference is that for AI you explicitly look at things like data provenance, model behaviour, and human‑in‑the‑loop controls.
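To show what that can look like as a register entry, here is a rough sketch. It assumes a simple three-stage lifecycle and a single gate before production; the `AISystem` fields, the `Stage` names, and the `promote_to_production` function are hypothetical, and a real organisation would map them onto its existing risk and change processes.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    EXPERIMENT = "experiment"
    PRODUCTION = "production"
    RETIRED = "retired"


@dataclass
class AISystem:
    """Hypothetical register entry for one AI system."""
    name: str
    business_owner: str = ""
    technical_owner: str = ""
    risk_assessed: bool = False
    data_provenance_documented: bool = False
    model_behaviour_tested: bool = False
    human_oversight_defined: bool = False
    stage: Stage = Stage.EXPERIMENT


def production_blockers(system: AISystem) -> list[str]:
    """List what is still missing before the system may go to production."""
    checks = {
        "business owner": bool(system.business_owner),
        "technical owner": bool(system.technical_owner),
        "risk assessment": system.risk_assessed,
        "data provenance": system.data_provenance_documented,
        "model behaviour testing": system.model_behaviour_tested,
        "human-in-the-loop controls": system.human_oversight_defined,
    }
    return [label for label, ok in checks.items() if not ok]


def promote_to_production(system: AISystem) -> None:
    """Move a system to production only when nothing is blocking it."""
    blockers = production_blockers(system)
    if blockers:
        raise ValueError(
            f"{system.name} cannot go to production; missing: {', '.join(blockers)}"
        )
    system.stage = Stage.PRODUCTION
```

The point of the gate is not the code itself but the decision it forces: an experiment with no named owner or no risk assessment stays an experiment, and the reason is written down.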

Safe, secure, lawful – in that order

In my podcast conversations on AI governance, a recurring theme is that we need AI systems that are safe, secure, and lawful – and in that order. It sounds obvious, but if you look at the way many organisations are currently adopting AI, usefulness and speed tend to come first, and everything else is an afterthought.

So when you’re designing AI governance, you need to build in questions like:

- Safe: What harm could this system do, and to whom? What are the plausible failure modes?

- Secure: What new attack surfaces are we opening up? How does this interact with our existing cyber posture?

- Lawful: Which regulations apply (privacy, discrimination, sector‑specific rules, emerging AI laws), and how are we demonstrating compliance?

Regulators globally are still catching up, and the landscape is moving fast – the EU AI Act is a good example – but organisations cannot wait for perfect guidance. Governance is about making defensible decisions now, under uncertainty, and being able to show your working later.

A good place to start is to read ISO/IEC 42001 (Artificial intelligence – Management system) or the Australian government’s recently released AI technical standard, which also outlines a nice AI lifecycle model.

Humans in the loop (for real)

One of the more comforting phrases in AI policy documents is “meaningful human oversight”. It suggests that somewhere, a wise and alert human is carefully monitoring what the AI does. In reality, we’re often giving tired, overworked people a “review” step in a process and pretending that’s enough.

If you want human oversight to be meaningful rather than decorative, ask:

- Does the human understand how the AI is being used and what it’s good or bad at?

- Can they realistically intervene, or are they just clicking “approve” to get through their workload?

- Do they have the authority to override the AI, and is that culturally acceptable?

This is where education and literacy come in. We’ve spent years educating people in data science and analytics, but we’ve neglected the data and AI leadership piece. Leaders need enough understanding to ask sensible questions, push back when needed, and sponsor better ways of working.

The organisational plumbing

AI governance is not just about models and policies; it’s organisational plumbing. It’s about how things actually move through your system.

Some practical elements that matter:

- Clear ownership: Who owns AI at the executive level? Is it scattered across IT, data, risk, and marketing, or is there a coherent view?

- AI strategy: Does your organisation have a clear AI strategy, or are you still treating AI as a tactical tool?

- Integrated processes: Does AI feature in your project gating, vendor procurement, and change management processes, or is it sneaking in via shadow IT?

- Lifecycle thinking: Are you governing AI experiments differently from production systems, and do you know when something has quietly become “business critical”?

When we built out data governance at UNSW, we discovered that getting the right people in the room – data owners, security, legal, business stakeholders – was half the battle. The same is true for AI governance. It’s inherently interdisciplinary; no single function can own it end‑to‑end.

Talent, capacity, and the boring work

There’s a hard reality here: effective AI governance requires people who know what they’re doing, and right now there are not enough of them. It’s the same story we’ve seen with data governance and cyber security.

You need people who can:

- Understand the technology well enough to spot nonsense.

- Understand the regulatory landscape well enough to know when to pick up the phone to legal.

- Navigate organisational politics well enough to get things done.

This is partly why I’ve been building courses on data governance and AI for organisational innovation – we need to equip leaders with practical tools, not just scare them with horror stories. AI governance will not succeed if it is seen solely as a compliance burden; it has to be framed as enabling safe innovation.

And yes, a lot of this work is boring: cataloguing data, mapping processes, writing down decisions, chasing up approvals. But boring is where resilience lives.

Where to start

If all of this feels overwhelming, here’s a simple way to begin:

- Take stock of where AI is already in your organisation – formally and informally.

- Make sure your data governance basics are in place; revisit those five questions.

- Identify a small number of high‑impact AI use cases and run them through a lightweight governance process as a pilot.

- Use the lessons from that pilot to refine your policies, roles, and workflows.

- Educate your leaders and teams continuously; this space is not going to stand still.

Effective AI governance is not a one‑off project. It’s an ongoing capability, built on the unglamorous disciplines of data governance, cyber security, risk management, and sensible leadership.

If we get that right, we can spend less time reacting to crises and more time using AI to actually create value.