The hype cycle and AI: why we need to stay grounded

Generative AI has hit the peak of inflated expectations. The Gartner Hype Cycle reminds us we've been here before - and shows why governance and doing the work, not hype, still matter.

Every few years, a new technology arrives wrapped in the language of inevitability. It will transform everything. It will disrupt every industry. It will redefine what it means to be human, to work, to create. Right now, that technology is artificial intelligence.

And while the capabilities of contemporary AI systems are genuinely impressive, the surrounding discourse feels oddly familiar. For those of us who have lived through multiple waves of technological change, from the early internet to big data to blockchain, the current moment has a distinct sense of déjà vu.

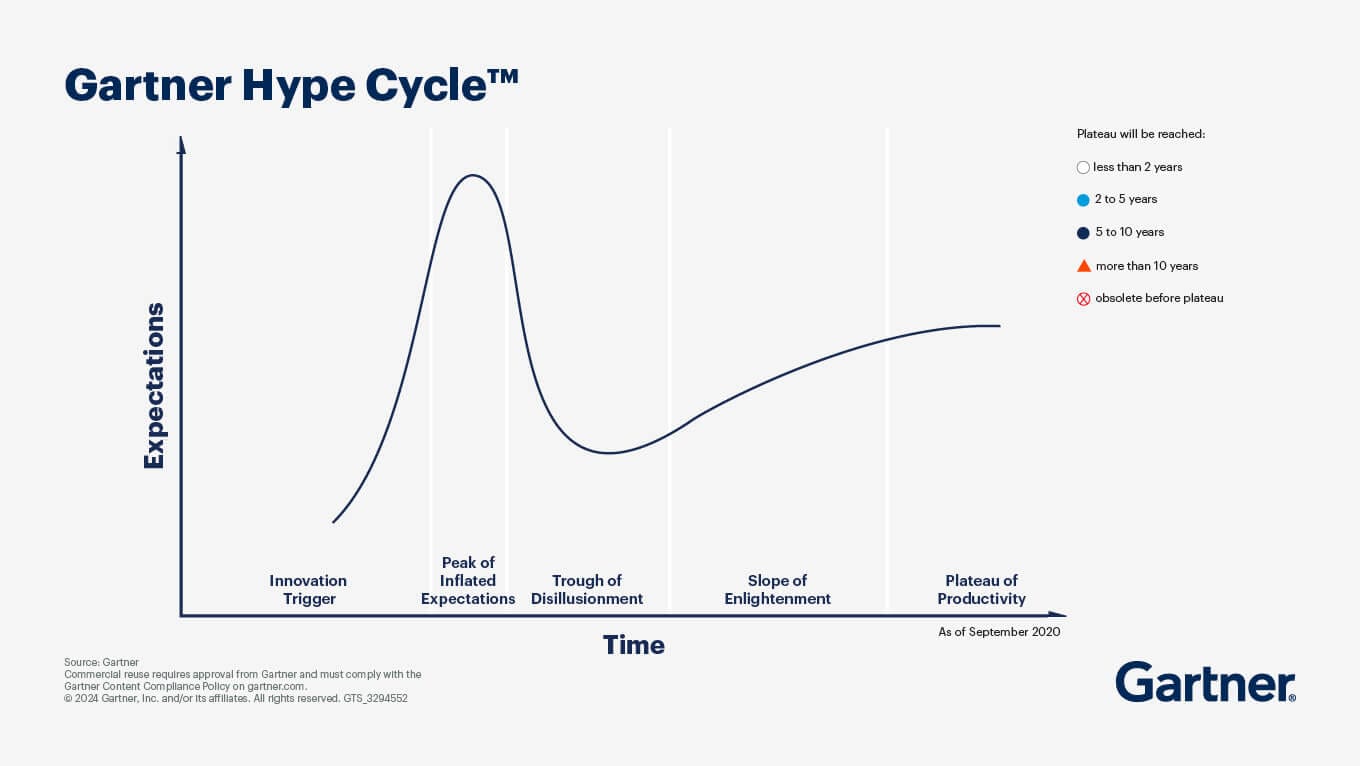

This is where the Gartner Hype Cycle becomes a useful lens.

Understanding the hype cycle

The Gartner Hype Cycle is a simple but powerful model that describes how new technologies are typically adopted. It maps a predictable pattern of collective enthusiasm, disappointment, and eventual maturity.

The stages are well known:

- Innovation trigger: A breakthrough or new idea captures attention

- Peak of inflated expectations: Hype surges; expectations become unrealistic

- Trough of disillusionment: Failures and limitations emerge; interest wanes

- Slope of enlightenment: Practical use cases begin to take shape

- Plateau of productivity: The technology becomes stable, useful, and embedded

Importantly, the Hype Cycle is not about whether a technology will succeed. It is about how our expectations overshoot reality before settling into something more sustainable.

AI and the peak of inflated expectations

Generative AI, particularly large language models, has clearly reached the peak of inflated expectations. According to Gartner's 2025 analysis, GenAI is now sliding into the trough of disillusionment as organisations shift focus from undifferentiated enthusiasm to the foundational technologies necessary for sustainable, scalable AI delivery.

We are seeing claims that AI will:

- Replace large portions of the workforce within a few years

- Render higher education obsolete

- Solve complex societal problems with minimal human intervention

- Achieve forms of general intelligence in the near term

At the same time, organisations are rushing to "AI-enable" everything, often without a clear understanding of the problem they are trying to solve.

This is classic hype cycle behaviour.

I often cite Amara's law when discussing technology innovation: “We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

There is a tendency to mistake rapid capability improvements for inevitability of outcomes. But capability does not equal impact. Impact depends on integration, governance, human systems, incentives, and institutional trust, all of which evolve far more slowly than technology.

We have been here before

The current AI moment echoes earlier waves:

- The dot-com boom promised a complete reinvention of commerce; it delivered transformation, but only after a crash and consolidation

- Big data was framed as a universal decision-making engine; in reality, data quality, governance, and organisational culture proved far more decisive

- Blockchain was expected to decentralise everything; instead, it found more limited but still meaningful applications

In each case, the underlying technology did matter. But it did not unfold in the way the hype suggested. The pattern is not failure. It is recalibration, and it is waiting for the people AND the technology AND the underlying infrastructure to catch up with each other.

Why this matters for AI governance

From an AI governance perspective, the hype cycle is more than an interesting model. It is a warning.

Periods of inflated expectations tend to produce:

- Overinvestment in poorly defined use cases

- Underestimation of risks and externalities

- Weak governance frameworks rushed into place

- Policy responses driven by fear or hype rather than evidence

This creates a paradox. At precisely the moment when careful, thoughtful governance is most needed, the surrounding discourse becomes least conducive to it. If we assume that AI will immediately transform everything, we risk building regulatory and organisational responses that are brittle, reactive, and misaligned with reality. Conversely, if we dismiss AI entirely as "just hype," we risk missing the genuine, longer-term transformation that will emerge during the slope of enlightenment and plateau phases.

What matters now

Gartner's 2026 analysis shows a clear shift in priorities. The focus is moving from GenAI hype to foundational enablers: AI engineering, ModelOps, AI-ready data, and governance frameworks. These are the real bottlenecks.

Research shows that 99% of leaders report using AI in operations, yet 47% don't have AI policies in place. That trust gap slows scaling and invites risk.

So what does it mean to take the hype cycle seriously? It means holding two ideas at once:

- AI is genuinely important and will have lasting impact

- The current narrative about AI is likely overstated and temporally distorted

Practically, this suggests a different posture:

- Focus on use cases, not abstractions. Where does AI actually create value in specific contexts?

- Invest in data governance and organisational capability. These are the real bottlenecks.

- Design for human-AI collaboration, not full automation fantasies.

- Expect a period of disillusionment. Plan for it rather than being surprised by it.

- Resist both utopian and dystopian extremes. Neither is a useful guide for decision-making.

In other words, treat AI as a technology that is powerful and evolving but ultimately embedded in human systems, rather than as an unstoppable force with its own trajectory.

A familiar cycle, a different responsibility

The hype cycle reminds us that while technologies change, human behaviour does not change nearly as quickly.

We get excited > We overestimate > We correct > We learn (rinse and repeat).

What is different this time is not the existence of hype, but the scale and speed at which it propagates. Social media, venture capital dynamics, and geopolitical competition amplify the cycle in ways that make it more intense and more consequential.

For those working in AI, policy, and governance, the task is not to dampen innovation, but to remain grounded while others are swept up in the moment. Because we have, indeed, done this before. And if we pay attention, we might navigate it a little more wisely this time.

Where do you sit on the hype cycle?

How is your organisation approaching AI? Are you caught up in inflated expectations, or building the governance foundations that will matter in the long run?

Many of us are working with organisations that are navigating AI adoption, helping build governance frameworks that are practical, scalable, and aligned with real-world use cases rather than hype-driven timelines. Many of us are wrestling with how to move from AI enthusiasm to sustainable implementation. We are not alone, and sharing our journeys may help others.
